TL;DR:

- AI accelerates code development faster than security reviews, increasing vulnerabilities and security debt. SMBs face higher risks from unreviewed AI-generated code, supply chain issues, and AI-driven attacks. Implementing automated security measures and governance can help mitigate these growing threats.

AI is creating code faster than security teams can review it, and the consequences for European SMBs are serious. Rapid AI progress accelerates software development velocity, producing more code with vulnerabilities faster than teams can remediate. In fact, 82% of companies now carry unresolved security debt, meaning flaws that have been identified but never fixed. The common assumption is that AI tools also accelerate security fixes. The reality is more complicated. AI speeds up creation far more reliably than it speeds up remediation, leaving a widening gap between the vulnerabilities that appear in your applications and the ones your team actually closes.

Table of Contents

- How rapid AI accelerates both development and vulnerabilities

- Understanding the new types of AI-driven security flaws

- How attackers use AI to exploit security gaps faster

- Practical steps for closing app security gaps in the age of AI

- A smarter mindset: Why velocity metrics and nuanced governance are vital

- Take your app security and digital transformation further

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI speeds amplify risks | AI accelerates app creation but creates more vulnerabilities than teams can resolve, increasing overall risk. |
| New flaw types emerge | AI-generated code introduces unique security flaws such as slopsquatting and complex dependencies. |
| Attackers exploit faster | AI enables threat actors to automate attacks, leverage old vulnerabilities, and increase phishing dramatically. |
| Actionable protection steps | SMBs must embed security practices in their development lifecycle, use scanning tools, and ensure continuous monitoring. |
| Risk velocity matters most | Tracking the rate of vulnerability creation versus remediation is essential for staying ahead of AI-driven threats. |

How rapid AI accelerates both development and vulnerabilities

AI intensifies both productivity and security exposure. AI-assisted development tools such as GitHub Copilot, Amazon Q Developer (formerly CodeWhisperer), and similar assistants allow developers to produce working code at a pace that would have been unimaginable five years ago. For SMBs, this is genuinely exciting: faster development means faster product launches, lower costs, and quicker iteration. But speed has a hidden cost.

Veracode's State of Software Security (SOSS) report consistently shows that application security debt is rising across organisations of all sizes. When developers ship code faster, security reviews struggle to keep pace. Vulnerabilities accumulate. Teams patch the most critical issues and defer the rest, and that deferred work becomes security debt.

Between 25% and 45% of AI code outputs contain vulnerabilities, depending on the model and context. That is not a marginal risk. For a business deploying AI-assisted development across multiple projects, this means a significant proportion of new code may contain exploitable flaws from day one.

| Metric | Traditional development | AI-assisted development |
|---|---|---|
| Code output speed | Moderate | Very high |
| Vulnerability introduction rate | Lower | 25 to 45% of outputs |
| Security review capacity | Matched to pace | Often insufficient |
| Security debt accumulation | Gradual | Rapid |

The risks for European SMBs are particularly acute because many operate with small IT teams or outsourced development, and neither model scales well against this pace of vulnerability creation. Key risks include:

- Unreviewed code entering production environments without security sign-off

- Developers relying on AI suggestions without understanding the security implications

- Accumulated vulnerabilities creating large attack surfaces over time

- Compliance exposure under GDPR and NIS2 when breaches occur

- Limited internal capacity to triage and prioritise remediation

Understanding AI marketing challenges is one thing, but the security dimension of AI adoption is where many SMBs are genuinely underprepared. Choosing the best AI tools for your business requires factoring in their security footprint, not just their feature list.

“The velocity of AI-assisted development has fundamentally changed the risk equation. Organisations are no longer managing a slow accumulation of vulnerabilities. They are managing a flood.”

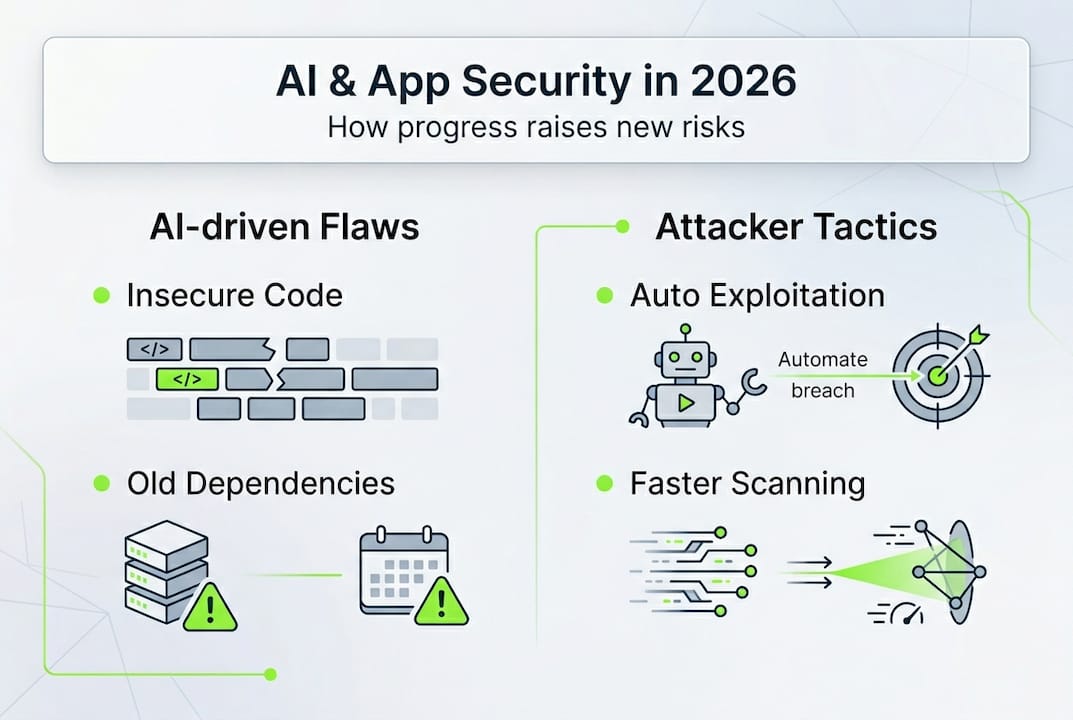

Understanding the new types of AI-driven security flaws

As vulnerabilities rise, it’s vital to explore how AI creates new forms of security risks for SMBs. AI-generated code does not invent flaws from scratch. It learns from vast repositories of existing code, much of which contains insecure patterns. When an AI model reproduces those patterns, it carries the same weaknesses forward, often without any visible warning to the developer.

AI-generated code introduces specific security flaws including insecure pattern reproduction, outdated dependencies, and complexity that makes subsequent fixes harder. One of the more surprising risks is a phenomenon called slopsquatting. This occurs when an AI model recommends a software package that does not actually exist, and an attacker registers that package name with malicious code inside it. Developers who follow the AI’s suggestion then unknowingly install the attacker’s package.

Studies suggest that approximately 19.7% of AI-recommended packages are non-existent, creating a significant supply chain risk for any team using AI coding assistants without verification steps.
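One lightweight verification step is to compare every dependency an AI assistant suggests against a list of packages your team has already vetted. The sketch below is a minimal illustration under stated assumptions: the `APPROVED_PACKAGES` allowlist and the function names are hypothetical, and the parser handles only simple `requirements.txt`-style lines. It does not replace a real dependency scanner.

```python
from __future__ import annotations

import re

# Hypothetical allowlist: packages your team has already reviewed and approved.
APPROVED_PACKAGES = {"requests", "flask", "sqlalchemy"}


def normalise(name: str) -> str:
    """Lowercase the name and collapse runs of '-', '_' and '.' into '-'."""
    return re.sub(r"[-_.]+", "-", name).lower()


def requirement_name(line: str) -> str | None:
    """Return the package name from one requirements-style line, or None."""
    line = line.split("#", 1)[0].strip()  # drop comments
    if not line or line.startswith("-"):  # skip blanks and pip options
        return None
    match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
    return normalise(match.group(0)) if match else None


def unverified_packages(requirements_text: str, approved: set[str]) -> list[str]:
    """List packages that are not on the approved list, in first-seen order."""
    approved_norm = {normalise(p) for p in approved}
    flagged: list[str] = []
    for line in requirements_text.splitlines():
        name = requirement_name(line)
        if name and name not in approved_norm and name not in flagged:
            flagged.append(name)
    return flagged


suggested = "requests==2.31.0\n# added by assistant\nFlask>=2.0\nfast-json-parserx\n"
print(unverified_packages(suggested, APPROVED_PACKAGES))
```

Anything the function flags is not necessarily malicious. It simply has not been checked yet, so a person should confirm that the package exists, is maintained, and is the one the developer intended before it is installed.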

| Flaw type | Traditional code | AI-generated code |
|---|---|---|
| Insecure patterns | Introduced by developer error | Reproduced from training data |
| Outdated dependencies | Gradual drift over time | Introduced immediately at generation |
| Supply chain risk | Known package vulnerabilities | Slopsquatting and phantom packages |
| Code complexity | Grows with project age | Can be high from the outset |
| Visibility of flaws | Often detectable by review | Can be obscured by generated complexity |

The list below outlines the specific flaw categories SMBs should be aware of:

- Insecure pattern reproduction: AI models trained on legacy code reproduce authentication flaws, injection vulnerabilities, and weak cryptography.

- Outdated dependencies: AI tools frequently suggest libraries that have known vulnerabilities, simply because those libraries appeared in training data.

- Slopsquatting: Phantom package recommendations create direct supply chain exposure.

- Complexity barriers: AI-generated code can be difficult for human reviewers to audit, slowing down the identification of embedded flaws.

Keeping up with 2026 digital marketing trends means adopting AI tools rapidly, but this must be balanced against robust AI and GDPR guidance to avoid compliance failures when flaws are exploited.

Pro Tip: Run automated dependency scanning on every project that uses AI-generated code. Tools such as OWASP Dependency-Check or Snyk can flag known vulnerabilities in suggested packages before they reach production. OWASP Dependency-Check is open source, and Snyk offers a free tier.

How attackers use AI to exploit security gaps faster

With technical flaws growing, let’s look at how attackers exploit these vulnerabilities with unprecedented speed using AI. The same AI capabilities that accelerate development for your team are available to attackers. The difference is that attackers face no compliance requirements, no governance frameworks, and no ethical constraints on how they deploy these tools.

According to the AI threat landscape report from Trend Micro, attackers leverage AI to automate vulnerability discovery and exploitation, weaponise old CVEs (Common Vulnerabilities and Exposures), and scale phishing and ransomware campaigns at a speed that defenders struggle to match.

Phishing attacks have increased by 1,000% as AI-driven techniques allow attackers to generate highly personalised, convincing messages at industrial scale. For SMBs, this is not an abstract threat. A single successful phishing attack can compromise application credentials, expose customer data, and trigger GDPR breach notification obligations.

AI-powered attacks affecting applications include:

- Automated vulnerability scanning: Attackers use AI to scan exposed applications for known flaws within minutes of deployment

- CVE weaponisation: Old vulnerabilities that were never patched become active targets as AI tools make exploitation trivial

- Credential stuffing at scale: AI accelerates the testing of stolen credentials against business applications

- AI-generated phishing: Personalised emails targeting specific employees with application-related lures

- Ransomware automation: AI streamlines the identification of high-value targets and the deployment of ransomware payloads

“Defenders are playing a slower game. AI gives attackers the ability to find and exploit a vulnerability in your application before your team has even finished the sprint review.”

Projections for 2026 suggest a significant rise in AI-assisted CVE exploitation, with security researchers warning that the time between vulnerability disclosure and active exploitation is shrinking from weeks to hours. Building AI compliance strategies into your operations is no longer optional. Your digital marketing strategy guide may focus on growth, but without secure applications underpinning it, that growth is built on fragile foundations.

Practical steps for closing app security gaps in the age of AI

Understanding these threats, here’s how SMBs can close gaps and protect their apps proactively. The good news is that effective app security does not require an enterprise budget. It requires discipline, the right processes, and a willingness to treat security as part of development rather than an afterthought.

EU SMBs should prioritise AI governance, dependency scanning, vendor assessments, and continuous monitoring, while integrating security directly into the software development lifecycle (SDLC). This is not as complex as it sounds. It means making security checks a standard part of how code is written and reviewed, not a separate exercise that happens once a quarter.

Practical security quick wins for SMBs:

- Enable automated static analysis tools in your development environment to flag insecure code as it is written

- Run dependency scans before every deployment using free tools such as Snyk or OWASP Dependency-Check

- Require two-factor authentication on all application access points and developer accounts

- Conduct quarterly reviews of third-party integrations and API connections

- Establish a clear process for triaging and prioritising vulnerability fixes by risk level

- Review AI-generated code before merging it into production branches
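To make a pre-deployment scan enforceable rather than advisory, the build can stop when a scan reports serious findings. The sketch below assumes a hypothetical, simplified report format (a JSON list of objects with `id` and `severity` fields). Real scanners such as Snyk or OWASP Dependency-Check each use their own output schema, so the parsing would need adapting.

```python
import json

# Ordering of severities; names are an assumption about the report format.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def blocking_findings(report_json: str, fail_at: str = "high") -> list:
    """Return findings at or above the fail_at severity.

    Findings with a missing or unrecognised severity are treated as blocking,
    so the gate fails closed instead of silently passing them.
    """
    threshold = SEVERITY_RANK[fail_at]
    findings = json.loads(report_json)
    return [
        f for f in findings
        if SEVERITY_RANK.get(str(f.get("severity", "")).lower(), threshold) >= threshold
    ]


def gate_exit_code(report_json: str, fail_at: str = "high") -> int:
    """Print blocking findings and return 1 to fail the build, else 0."""
    blockers = blocking_findings(report_json, fail_at)
    for finding in blockers:
        print(f"BLOCKING {finding.get('severity')}: {finding.get('id')}")
    return 1 if blockers else 0
```

In a pipeline, a wrapper script would read the scanner's report file, pass its contents to `gate_exit_code`, and hand the result to `sys.exit` so that a non-zero code stops the deployment.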

Steps to match AI development speed with security:

- Integrate security into your pipeline: Add automated security scans to your CI/CD (Continuous Integration/Continuous Deployment) pipeline so every code commit is checked automatically.

- Set a vulnerability SLA: Define how quickly each severity level must be fixed, for example critical vulnerabilities within 24 hours and high-severity vulnerabilities within one week.

- Train your team on AI-specific risks: Ensure developers understand slopsquatting, insecure pattern reproduction, and how to verify AI suggestions.

- Assess your vendors: Any third-party tool or platform you use should be evaluated for its own security posture, particularly AI tools with access to your data.

- Monitor continuously: Use application performance monitoring tools that include security alerting, so unusual behaviour triggers a response immediately.
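The vulnerability SLA in the steps above can be tracked with very little code. The sketch below encodes only the two targets named in the list (24 hours for critical, one week for high). The record fields (`id`, `severity`, `found_at`, `fixed`) are a hypothetical shape, and severities without a stated target are left without a deadline instead of being assigned a guessed one.

```python
from __future__ import annotations

from datetime import datetime, timedelta

# SLA windows taken from the targets named in the list above.
SLA = {
    "critical": timedelta(hours=24),
    "high": timedelta(weeks=1),
}


def sla_deadline(severity: str, found_at: datetime) -> datetime | None:
    """Return the fix-by time, or None when no SLA is defined for the severity."""
    window = SLA.get(severity.lower())
    return found_at + window if window else None


def overdue(vulns: list[dict], now: datetime) -> list[str]:
    """Return ids of unfixed vulnerabilities whose SLA deadline has passed."""
    late = []
    for vuln in vulns:
        deadline = sla_deadline(vuln["severity"], vuln["found_at"])
        if deadline is not None and not vuln.get("fixed", False) and now > deadline:
            late.append(vuln["id"])
    return late
```

A daily job that runs `overdue` against the team's issue tracker export gives the named security owner a short, concrete list to chase.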

Linking your master marketing workflow to secure, well-governed applications protects both your customers and your reputation. Exploring AI success strategies for European SMBs should always include this security dimension as a core component.

Pro Tip: Human oversight cannot be replaced entirely by automated tools. Assign a named person in your team to own security reviews, even if that person is not a full-time security professional. Accountability drives action.

A smarter mindset: Why velocity metrics and nuanced governance are vital

Despite the practical steps above, there is a deeper issue that most guides on AI and app security fail to address. The problem is not simply that SMBs lack the right tools. The problem is that most organisations measure the wrong things.

Businesses track development velocity, feature releases, and deployment frequency. Very few track what we would call risk velocity, the rate at which new vulnerabilities are being created versus the rate at which they are being resolved. When you measure only output, you are blind to accumulating risk. When you measure risk velocity, you can see whether your security posture is improving or deteriorating in real time.

Shifting to a risk velocity metric requires AI for detection and patching, but critically, it also requires human oversight to interpret what the numbers mean and act on them. AI tools can surface vulnerabilities faster than ever. But deciding which ones to fix first, understanding the business context of a flaw, and making governance decisions about acceptable risk levels all remain human responsibilities.
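Risk velocity can be expressed in several ways. One simple interpretation, assumed here for illustration, is the number of vulnerabilities created minus the number resolved in each period, tracked alongside the running backlog. The `Period` class and function names below are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Period:
    label: str
    created: int   # vulnerabilities newly identified in the period
    resolved: int  # vulnerabilities fixed in the period


def risk_velocity(period: Period) -> int:
    """Net change in open vulnerabilities; positive means debt is growing."""
    return period.created - period.resolved


def backlog_trend(periods, starting_backlog=0):
    """Return (label, risk velocity, open backlog) for each period in order."""
    backlog = starting_backlog
    trend = []
    for period in periods:
        backlog = max(0, backlog + risk_velocity(period))
        trend.append((period.label, risk_velocity(period), backlog))
    return trend


sprints = [Period("sprint 1", 12, 8), Period("sprint 2", 9, 11), Period("sprint 3", 15, 6)]
for label, velocity, backlog in backlog_trend(sprints, starting_backlog=20):
    print(f"{label}: velocity {velocity:+d}, open backlog {backlog}")
```

A run of positive values means the security posture is deteriorating even if every sprint ships on time, which is exactly the signal that output-only metrics hide.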

The broader issue for European SMBs is that AI governance frameworks are often treated as compliance exercises rather than operational tools. Ticking a box on an AI risk assessment is not the same as genuinely understanding how AI transforms businesses and where the security implications lie. Real governance means asking hard questions: Who reviews AI-generated code before it ships? What happens when a vulnerability is found in a third-party AI tool? How quickly can we respond?

Researchers studying the AI vulnerability lifecycle note that the window between vulnerability creation and exploitation is narrowing. Governance frameworks built for a slower era are no longer fit for purpose. SMBs that invest in genuine, operational security governance today will be far better positioned than those chasing the latest AI benchmarks.

Take your app security and digital transformation further

The gap between AI-accelerated development and effective app security is real, and it is growing. But it is also manageable with the right partner and the right approach.

At Done.lu, we work with European SMBs to build digital foundations that are both fast and secure. Our AI consulting for SMBs service helps you adopt AI tools with clear governance frameworks, so your team gains the productivity benefits without accumulating hidden security debt. Our web development guide explains how we approach secure, scalable builds, and if you are considering a more tailored solution, our custom web development benefits page outlines how bespoke development reduces the risks associated with off-the-shelf code. Whether you are starting your AI journey or looking to strengthen an existing digital infrastructure, we are here to help you move forward with confidence.

Frequently asked questions

What is security debt and why is it rising with AI?

Security debt refers to unresolved application vulnerabilities that accumulate when fixes are deferred. AI accelerates code creation far faster than remediation capacity grows, so debt compounds rapidly across development teams.

How does AI-generated code increase security risks?

AI-generated code frequently reproduces insecure patterns from training data and introduces complex or outdated dependencies. This exposes teams to risks such as slopsquatting and weak authentication that are difficult to detect without dedicated scanning.

What AI-powered attack types should SMBs be most aware of?

Attackers use AI to automate vulnerability exploitation, run mass phishing campaigns, and weaponise old CVEs at a scale and speed that manual defences cannot match without automated monitoring in place.

What can European SMBs do to close app security gaps quickly?

Integrating security into the SDLC, running automated dependency scans, enforcing two-factor authentication, and assigning clear ownership of vulnerability triage are the most effective immediate steps for SMBs with limited security resources.