Harnessing artificial intelligence in business for SME growth

April 30, 2026

TL;DR:

- AI brings competitive advantages but exposes SMEs to high breach costs and regulatory risks.

- The EU AI Act classifies AI systems by risk and offers SME-specific compliance reliefs.

- Implementing layered security frameworks and continuous monitoring safeguards AI operations effectively.

Artificial intelligence is reshaping how European businesses operate, but it is also introducing risks that many small and medium-sized enterprises (SMEs) are not yet prepared to manage. The average cost of a data breach reached $4.88 million in 2024, and that figure does not account for the reputational damage that can permanently erode customer trust. At the same time, regulations are evolving rapidly, leaving many business owners uncertain about what they must do, when they must do it, and how much it will cost. This guide cuts through the confusion by explaining the core security frameworks, the key obligations under EU law, and the practical steps you can take right now to protect your business and build a resilient AI operation.

Table of Contents

- Why secure AI matters for European SMEs

- Decoding the EU AI Act and SME-specific obligations

- Essential frameworks: Secure intelligence and NIST AI RMF demystified

- Common security pitfalls and SME solutions

- Putting secure AI into action: Practical SME roadmap

- Our perspective: Security, innovation and trust — balancing what matters

- Get started with secure AI and digital transformation for your business

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Secure AI is essential | AI breaches can devastate SMEs; robust security ensures trust and business stability. |
| Regulations are evolving | EU law now demands a risk-based, security-by-design approach tailored for SMEs. |
| Frameworks make compliance practical | Integrated frameworks help SMEs manage risk, improve resilience, and meet necessary standards. |
| Layered defences work best | Proactive, end-to-end protections beat simple, bolt-on fixes for lasting security. |
| Practical steps enable action | Inventory, assess, secure, and monitor your AI for legal compliance and operational gains. |

Why secure AI matters for European SMEs

Artificial intelligence promises genuine competitive advantages: faster decision-making, automated workflows, richer customer insights, and lower operational costs. However, these benefits come with a set of risks that are particularly acute for smaller organisations. Unlike large corporations with dedicated security teams and deep compliance budgets, SMEs typically operate with limited resources, which means a single incident can have an outsized impact on the entire business.

Consider the financial exposure first. The average breach cost $4.88 million in 2024, and even a fraction of that figure would be catastrophic for most SMEs. Beyond the immediate financial hit, there are regulatory fines, legal costs, and the long-term erosion of client confidence. For businesses in sectors such as legal, finance, healthcare, or accounting, a breach involving client data can effectively end the business.

The regulatory environment is also tightening considerably. The EU AI Act mandates a risk-based approach to AI governance, with prohibited practices and strict requirements for high-risk AI systems, including data governance, risk management, technical documentation, human oversight, and cybersecurity measures. Most of these requirements apply from August 2026, meaning the window for preparation is narrowing fast.

The key risks SMEs face when deploying AI without proper security include:

- Data exposure: AI systems process large volumes of sensitive data, making them attractive targets for attackers.

- Model manipulation: Adversarial attacks can corrupt AI outputs, leading to flawed business decisions.

- Regulatory non-compliance: Failing to meet EU AI Act or GDPR requirements can result in substantial fines.

- Reputational damage: A single publicised breach can undermine years of brand-building.

- Operational disruption: Compromised AI systems can halt core business processes without warning.

“Securing AI is not simply a technical task. It is a business continuity strategy. For SMEs, the cost of inaction consistently exceeds the cost of preparation.”

Exploring secure AI strategies for SMEs early gives your business the time and flexibility to build robust controls before regulatory deadlines arrive. The good news is that proportionate, affordable approaches do exist, and this guide will walk you through them.

Decoding the EU AI Act and SME-specific obligations

The EU AI Act is the world’s first comprehensive legal framework for artificial intelligence, and it applies to any business that develops, deploys, or uses AI systems within the European Union. Understanding its structure is the essential first step for any SME planning to adopt or expand AI capabilities.

The Act uses a risk-based classification system with four tiers:

| Risk tier | Examples | Key obligations |
|---|---|---|
| Unacceptable risk | Social scoring, real-time biometric surveillance | Prohibited outright |
| High risk | Recruitment tools, credit scoring, medical diagnostics | Full governance, documentation, human oversight |
| Limited risk | Chatbots, emotion recognition | Transparency obligations only |
| Minimal risk | Spam filters, AI-assisted content tools | No mandatory requirements |

For most SMEs, the majority of AI tools they use will fall into the limited or minimal risk categories. However, if you use AI for hiring decisions, loan assessments, or any form of automated profiling that significantly affects individuals, you are likely operating in the high-risk tier and must comply with the full set of requirements.

The EU AI Act also provides SME-specific reliefs, which are worth understanding in detail. Small businesses benefit from simplified documentation requirements, priority access to regulatory sandboxes (supervised testing environments where you can trial AI with reduced compliance burdens), and reduced fees for conformity assessments. These provisions were deliberately included to ensure that compliance does not become a barrier that only large firms can afford to clear.

Despite these reliefs, SME adoption barriers remain significant. Skills gaps, limited budgets, and poor data readiness are the three most commonly cited obstacles. The EU’s embrAIsme initiative and the AIAS cybersecurity platform are both working to address these challenges, but businesses cannot wait for external support alone.

Here is a practical sequence for approaching EU AI Act compliance as an SME:

- Audit your current AI use. List every AI tool or system your business uses, including third-party software with embedded AI features.

- Classify each system by risk tier. Use the Act’s categories to determine which obligations apply to each tool.

- Identify your documentation gaps. High-risk systems require technical documentation, conformity assessments, and human oversight mechanisms.

- Review your data governance practices. High-risk AI systems must be trained and operated on data that meets quality and relevance standards, and applying the same discipline to lower-risk tools is sound practice.

- Assign responsibility. Designate a person or team accountable for AI compliance within your organisation.

- Engage with your national sandbox programme. If you are developing or significantly customising AI, regulatory sandboxes allow you to test under supervision.
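The first steps in the sequence above (audit, classify, find gaps, assign responsibility) can be tracked in something as simple as a small script. The following is a minimal sketch, not a compliance tool: the system names, vendor, and obligation labels are hypothetical placeholders paraphrased from the risk-tier table in this guide, and the tier for each system is assumed to be assigned by a person who has reviewed the Act.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Obligation labels paraphrased from this guide's table; they are not legal text.
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["Prohibited: stop using the system"],
    RiskTier.HIGH: ["Governance", "Technical documentation", "Human oversight"],
    RiskTier.LIMITED: ["Transparency obligations"],
    RiskTier.MINIMAL: [],
}


@dataclass
class AISystem:
    name: str        # hypothetical tool name
    vendor: str
    purpose: str
    tier: RiskTier   # assigned manually after reviewing the Act
    owner: str = ""  # accountable person or team; empty means unassigned


def compliance_gaps(system: AISystem) -> list[str]:
    """Return the open items for one inventory entry."""
    gaps = list(OBLIGATIONS[system.tier])
    if not system.owner:
        gaps.append("Assign an accountable owner")
    return gaps


inventory = [
    AISystem("cv-screener", "ExampleVendor", "shortlisting job applicants", RiskTier.HIGH),
    AISystem("spam-filter", "ExampleVendor", "email filtering", RiskTier.MINIMAL, owner="IT lead"),
]

for system in inventory:
    print(system.name, "->", compliance_gaps(system))
```

A spreadsheet would serve equally well. The point is that every system has a tier, a list of open obligations, and a named owner.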

Understanding how AI intersects with your existing data obligations is equally important. Our guide on AI and GDPR compliance for SMEs provides a detailed breakdown of how these two regulatory frameworks interact and what you need to do to satisfy both simultaneously.

Pro Tip: Do not treat the EU AI Act as a separate compliance project from GDPR. The two frameworks overlap significantly, particularly around data minimisation, purpose limitation, and individual rights. Addressing them together saves time and reduces duplication of effort.

Essential frameworks: Secure intelligence and NIST AI RMF demystified

Two frameworks stand out as particularly useful for SMEs seeking to build secure, trustworthy AI systems: the NIST AI Risk Management Framework (AI RMF) and the Secure Intelligence Framework. Neither requires a large technical team to implement, and both provide structured approaches that scale to the size and complexity of your business.

NIST AI RMF: A lifecycle approach to trustworthy AI

The NIST AI RMF was developed by the US National Institute of Standards and Technology and has become a globally recognised standard for AI risk management. It organises AI governance into four core functions:

| Function | What it covers | Practical output |
|---|---|---|
| Govern | Policies, accountability structures, culture | AI governance policy document |
| Map | Context setting, risk identification | Risk register for each AI system |
| Measure | Metrics, testing, evaluation | Performance and safety benchmarks |
| Manage | Prioritisation, response, recovery | Incident response plan |

The framework is designed to ensure that AI systems are valid, reliable, safe, secure, and resilient throughout their entire lifecycle. For SMEs, the most immediately valuable function is often “Map,” because it forces you to think clearly about where each AI system operates, what data it touches, and what could go wrong.
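To make the "Map" output concrete, here is a rough sketch of what a risk register entry might hold. The likelihood-times-impact score and the 1 to 5 scales are a common risk-matrix convention assumed here for illustration; the NIST AI RMF does not prescribe them, and the example systems are made up.

```python
from dataclasses import dataclass


@dataclass
class RiskEntry:
    system: str        # which AI system the risk belongs to
    data_touched: str  # what data the system processes
    failure_mode: str  # what could go wrong
    likelihood: int    # 1 (rare) to 5 (almost certain), assumed scale
    impact: int        # 1 (negligible) to 5 (severe), assumed scale

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def prioritise(register: list[RiskEntry]) -> list[RiskEntry]:
    """Return the register with the highest-scoring risks first."""
    return sorted(register, key=lambda r: r.score, reverse=True)


register = [
    RiskEntry("support-chatbot", "customer messages", "leaks personal data in replies", 2, 4),
    RiskEntry("cv-screener", "applicant CVs", "biased shortlisting", 3, 5),
]

for entry in prioritise(register):
    print(entry.score, entry.system, "-", entry.failure_mode)
```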

The Secure Intelligence Framework: Security by design

The Secure Intelligence Framework takes a three-layer approach to AI security, covering the data layer, the model layer, and the system layer. Its core principle is that each layer should be designed with the assumption that it could be compromised. This “assume breach” mentality leads to much stronger protections than a perimeter-only approach.

Key protections at each layer include:

- Data layer: Data minimisation (collect only what you need), encryption at rest and in transit, and access controls that limit who can query or modify training data.

- Model layer: Protections against model inversion attacks (where attackers extract training data from model outputs) and data poisoning (where malicious data corrupts model behaviour during training).

- System layer: Endpoint protections, network segmentation, and audit logging to detect and respond to anomalous behaviour.
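As an illustration of the data-layer idea, the sketch below strips a record down to an allow-list of fields and replaces the customer identifier with a keyed hash before the record reaches an AI system. The field names and the key are hypothetical, and this is one possible approach rather than something the framework itself specifies. Note that pseudonymised data generally still counts as personal data under GDPR.

```python
import hashlib
import hmac

# Hypothetical allow-list: the only fields this AI use case needs.
ALLOWED_FIELDS = {"customer_id", "order_total", "country"}


def pseudonymise(value: str, key: bytes) -> str:
    """Replace an identifier with a keyed hash so records stay linkable
    without exposing the original value."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def minimise(record: dict, key: bytes) -> dict:
    """Keep allow-listed fields only and pseudonymise the customer ID."""
    slim = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    if "customer_id" in slim:
        slim["customer_id"] = pseudonymise(str(slim["customer_id"]), key)
    return slim


raw = {"customer_id": "C-1042", "email": "a@example.com", "order_total": 59.9, "country": "LU"}
# In practice the key would come from a secret store, not from source code.
print(minimise(raw, key=b"placeholder-key"))
```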

Open-source tools can make this more accessible than it sounds. Fairlearn helps detect and mitigate bias in AI models, while MLflow provides model tracking and governance capabilities. Both are free Python libraries and can be integrated into existing workflows with basic scripting skills rather than a dedicated security team.
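To show what a bias check actually measures, the sketch below computes one common fairness metric by hand: the gap in selection rates between groups. Fairlearn provides a ready-made metric of the same name in `fairlearn.metrics`; this plain-Python version is only an illustration, and the decisions and group labels are made up.

```python
from collections import defaultdict


def selection_rates(predictions: list[int], groups: list[str]) -> dict[str, float]:
    """Share of positive predictions (1) within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions: list[int], groups: list[str]) -> float:
    """Gap between the highest and lowest group selection rate (0 means parity)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Made-up shortlisting decisions for two hypothetical applicant groups.
preds = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

print(selection_rates(preds, groups))
print(demographic_parity_difference(preds, groups))
```

A large gap does not prove unlawful discrimination on its own, but it is a signal that the system deserves closer human review.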

Understanding how these frameworks support AI-driven transformation for European SMEs is crucial. Security and innovation are not opposing forces. When built correctly, a secure AI architecture actually accelerates adoption by giving stakeholders the confidence to use AI tools more broadly and decisively.

Pro Tip: Start with the NIST AI RMF’s “Govern” function before anything else. Establishing clear accountability for AI decisions within your organisation creates the foundation that all other security measures depend on.

Common security pitfalls and SME solutions

Even well-intentioned businesses make predictable mistakes when deploying AI. Recognising these pitfalls early can save significant time, money, and reputational risk.

Pitfall 1: Bolt-on security

The most common mistake is treating security as something you add after an AI system is already built or deployed. Bolt-on security is fundamentally fragile compared to layered frameworks like SAIF or AEGIS, which embed controls at every stage of the AI lifecycle. A security patch applied after the fact cannot address vulnerabilities that are baked into the system’s architecture.

Pitfall 2: Insufficient access controls

Many SMEs grant broad access to AI systems and the data they use, either for convenience or because access management feels complex. In practice, every person or system that can access your AI environment is a potential attack vector. Role-based access control (RBAC), where each user is given only the permissions they need for their specific role, dramatically reduces this exposure.
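The core of RBAC fits in a few lines. The sketch below assumes a hypothetical set of roles and actions for an internal AI tool. In a real deployment these would normally live in your identity provider or the tool's own permission settings rather than in application code.

```python
# Hypothetical roles and permissions for an internal AI tool.
ROLE_PERMISSIONS = {
    "analyst": {"query_model"},
    "ml_engineer": {"query_model", "read_training_data", "deploy_model"},
    "auditor": {"read_audit_log"},
}


def is_allowed(role: str, action: str) -> bool:
    """Deny by default: unknown roles and unlisted actions are refused."""
    return action in ROLE_PERMISSIONS.get(role, set())


def require(role: str, action: str) -> None:
    """Raise PermissionError unless the role is permitted to perform the action."""
    if not is_allowed(role, action):
        raise PermissionError(f"role '{role}' may not perform '{action}'")


require("analyst", "query_model")  # permitted, so nothing happens
print(is_allowed("analyst", "read_training_data"))
print(is_allowed("contractor", "query_model"))
```

The deny-by-default lookup is the design choice that matters: a role nobody has configured gets no access, rather than accidental access.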

Pitfall 3: No continuous monitoring

AI systems change over time. Model drift (where a model’s performance degrades as real-world data diverges from training data) is a genuine operational risk, not just a technical curiosity. Continuous monitoring and drift detection are critical components of responsible AI governance, yet many SMEs deploy a model and then assume it will continue to perform correctly indefinitely.
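One widely used way to quantify drift in a numeric input is the Population Stability Index (PSI), which compares how live data is distributed across bins against the training-time baseline. The sketch below is a simplified version with equal-width bins, and it assumes both samples are non-empty. The thresholds people quote (for example, treating values above roughly 0.25 as significant drift) are rules of thumb rather than standards.

```python
import math


def psi(baseline: list[float], current: list[float], bins: int = 10) -> float:
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all baseline values are equal

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    expected, actual = shares(baseline), shares(current)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


baseline = [i / 100 for i in range(100)]       # made-up training-time values
shifted = [0.5 + i / 200 for i in range(100)]  # made-up live values, skewed upwards

print(psi(baseline, baseline))
print(psi(baseline, shifted) > 0.25)
```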

Pitfall 4: Underestimating insider risk

External attacks get the most attention, but insider threats, whether through negligence or malice, account for a significant proportion of AI-related incidents. Clear data handling policies, audit logs, and regular access reviews mitigate this risk considerably.

The must-have technical controls for any SME deploying AI are:

- Encryption: All data used by AI systems should be encrypted both at rest and in transit.

- Access control: Implement RBAC and enforce the principle of least privilege.

- Audit logging: Maintain detailed logs of who accesses AI systems and what actions they take.

- Data minimisation: Only collect and retain the data that is strictly necessary for the AI’s purpose.

- Incident response plan: Define in advance how you will detect, contain, and recover from a security incident.
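Of the controls above, audit logging is the easiest to prototype. The sketch below shows one possible design, assumed here for illustration: each entry stores a hash of its own content plus the previous entry's hash, so later edits to the log become detectable. The user names, actions, and resources are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(entry: dict) -> str:
    """Hash every field of the entry except the stored hash itself."""
    body = {k: v for k, v in entry.items() if k != "hash"}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list[dict], user: str, action: str, resource: str) -> dict:
    """Append an entry whose hash covers its content and the previous hash."""
    entry = {
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "action": action,
        "resource": resource,
        "prev_hash": log[-1]["hash"] if log else "",
    }
    entry["hash"] = _digest(entry)
    log.append(entry)
    return entry


def verify(log: list[dict]) -> bool:
    """Detect edited, reordered, or mid-chain deleted entries.
    Entries removed from the end of the log are not detected."""
    prev = ""
    for entry in log:
        if entry["prev_hash"] != prev or entry["hash"] != _digest(entry):
            return False
        prev = entry["hash"]
    return True


log: list[dict] = []
append_entry(log, "alice", "query_model", "credit-scorer")
append_entry(log, "bob", "export_data", "training-set")
print(verify(log))

log[0]["user"] = "mallory"  # simulate tampering
print(verify(log))
```

For production use, most SMEs would rely on the logging features of their cloud platform or AI vendor. The sketch only illustrates what "tamper-evident" means.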

“The difference between a business that recovers from an AI security incident and one that does not is rarely about the sophistication of the attack. It is almost always about whether the business had a plan.”

Exploring the best AI tools for small businesses can also help you identify solutions that have security built in from the outset, rather than requiring you to retrofit protections yourself. For customer-facing AI, a secure approach to boosting digital marketing with AI keeps your marketing operations both effective and compliant.

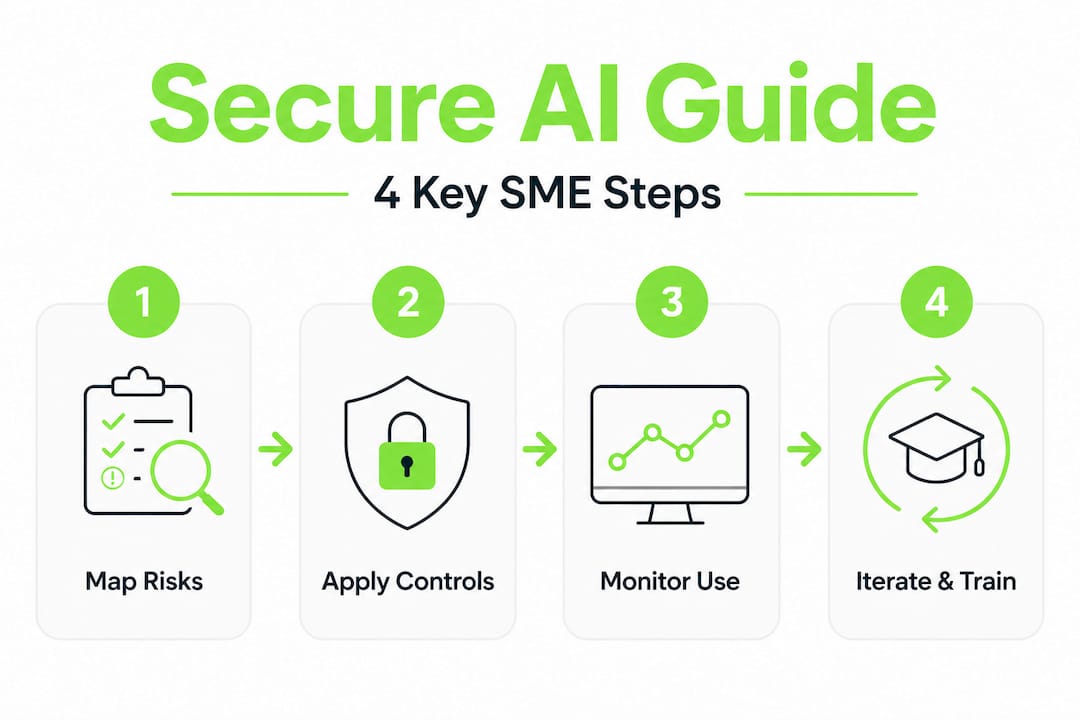

Putting secure AI into action: Practical SME roadmap

Knowing the frameworks and pitfalls is valuable, but what matters most is being able to act. The following roadmap gives you a clear, ordered sequence for implementing secure AI in your business, regardless of your current starting point.

- Conduct an AI system inventory. Document every AI tool in use across your organisation, including software-as-a-service (SaaS) products with embedded AI features. Note the vendor, the data it accesses, and the business function it supports.

- Classify each system by risk. Using the EU AI Act’s risk tiers as your guide, determine which systems require the most rigorous controls. Prioritise high-risk systems for immediate attention.

- Establish a governance policy. Create a written AI governance policy that defines accountability, acceptable use, data handling standards, and incident response procedures. This document does not need to be lengthy, but it must be clear and accessible to all staff.

- Implement proportionate technical controls. For each AI system, apply the appropriate controls from the Secure Intelligence Framework: encryption, access management, audit logging, and data minimisation. Scale the intensity of controls to the risk level of the system.

- Train your team. Security controls are only effective if the people using AI systems understand why they matter and how to follow the relevant procedures. Brief, regular training sessions are more effective than annual compliance exercises.

- Set up continuous monitoring. Establish baseline performance metrics for each AI system and implement alerts for significant deviations. Schedule regular reviews of access logs and model performance data.

- Engage with EU support programmes. The EU AI Act compliance framework includes provisions for SMEs to access regulatory sandboxes and technical assistance. Make use of these resources, particularly if you are developing or significantly customising AI systems.
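The continuous monitoring step in this roadmap can start very simply. The sketch below flags a new metric value when it falls too far from an established baseline. The three-standard-deviation threshold and the accuracy figures are assumptions chosen for illustration, so tune them to your own systems.

```python
from statistics import mean, stdev


def deviation_alert(baseline: list[float], latest: float, threshold: float = 3.0) -> bool:
    """Flag `latest` if it sits more than `threshold` standard deviations
    from the baseline mean. Needs at least two baseline values."""
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > threshold


weekly_accuracy = [0.91, 0.92, 0.90, 0.93, 0.91, 0.92]  # made-up baseline readings

print(deviation_alert(weekly_accuracy, 0.915))  # close to the baseline mean
print(deviation_alert(weekly_accuracy, 0.80))   # a sharp drop
```

A check like this can run on a schedule and send an email or chat message when it returns `True`, which is often enough for a small team.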

Pro Tip: If you are using AI tools for marketing or customer engagement, start your secure AI journey there. These systems typically handle significant volumes of personal data and are often the first to attract regulatory scrutiny. Our resource on using AI tools for digital marketing covers the specific considerations for this context.

The roadmap above is not a one-time exercise. Secure AI is an ongoing practice. As your business evolves, as new tools are adopted, and as regulations are updated, your governance framework must evolve with them. Building a culture of continuous improvement around AI security is what separates businesses that stay ahead of the curve from those that are perpetually catching up.

Our perspective: Security, innovation and trust — balancing what matters

After working with dozens of European SMEs on their AI and digital transformation journeys, we have observed a consistent pattern. The businesses that treat security as a constraint tend to implement it reluctantly and incompletely. The businesses that treat security as an enabler build something far more durable.

Here is the uncomfortable truth: regulatory compliance is the floor, not the ceiling. Meeting the minimum requirements of the EU AI Act will keep you out of trouble with regulators, but it will not, by itself, make your AI systems trustworthy in the eyes of your clients, your partners, or your own team. Trust is built through consistent behaviour over time, through transparency about how AI is used, through prompt and honest responses when things go wrong, and through a genuine commitment to getting better.

Bolt-on security fixes, applied at the last moment before a regulatory deadline, cannot replicate what security-by-design achieves. When controls are embedded from the beginning, they become part of how the system works rather than a layer of friction on top of it. This makes them more effective, easier to maintain, and less likely to be bypassed under operational pressure.

We also see SMEs underestimate the cultural dimension of AI security. Technology controls are necessary but not sufficient. A team that understands why data minimisation matters, why access logs are reviewed, and why model performance is monitored will actively support your security posture rather than inadvertently undermining it. Small, iterative improvements to both technology and culture consistently outperform large, one-off compliance exercises.

Our recommendation is to think of secure AI not as a project with a completion date, but as a permanent operating standard. The businesses that build this mindset now will be better positioned to adopt new AI capabilities quickly and confidently as they emerge, because they will already have the governance infrastructure in place to do so responsibly.

If you are ready to move from principles to practice, our AI consulting for growing SMBs service is designed to guide you through every stage of that journey, from initial audit to full implementation and team training.

Get started with secure AI and digital transformation for your business

Understanding the theory of secure AI is one thing. Putting it into practice in a way that fits your business, your sector, and your budget is where the real work begins. Done.lu has supported over 150 European SMEs through exactly this process, combining strategic AI consulting with practical implementation and ongoing support.

Whether you are just starting to explore AI adoption or you are already using AI tools and want to ensure they are properly secured and compliant, we have resources and services designed for your situation. Our SME AI strategies guide provides a detailed framework for building a secure, scalable AI operation. Our curated overview of top AI tools for SMEs helps you identify solutions that balance capability with compliance. And if your digital foundation needs strengthening alongside your AI strategy, our team can explain the custom web development benefits that support a secure, integrated digital presence. Reach out to discuss your specific needs and we will help you build a clear, actionable plan.

Frequently asked questions

What is the minimum requirement for AI security under EU law?

Businesses must follow a risk-based compliance approach, with stricter rules for high-risk AI systems, including mandatory data governance, risk management, technical documentation, human oversight, and cybersecurity measures effective from August 2026.

What are common mistakes SMEs make with AI security?

Typical errors include relying on bolt-on security measures added after deployment, skipping continuous monitoring, and underestimating insider risks, all of which leave AI systems significantly more vulnerable than layered, security-by-design frameworks would.

How do secure AI frameworks reduce breach risks?

Frameworks such as the Secure Intelligence Framework require layered controls across data, model, and system levels, reducing vulnerabilities at every stage from initial data collection through to live deployment and ongoing operation.

What is a regulatory sandbox for SMEs and AI?

A regulatory sandbox allows small businesses to test AI solutions in a supervised, low-risk environment with reduced compliance burdens, giving SMEs a safe space to develop and refine AI systems before full market deployment.

What open-source tools help SMEs secure their AI systems?

Fairlearn and MLflow are two recommended open-source tools: Fairlearn supports bias detection and mitigation, while MLflow provides model tracking and governance capabilities, both offering cost-effective routes to stronger AI compliance for SMEs.