Top 6 Google Workspace Luxembourg Alternatives 2026

May 15, 2026

TL;DR:

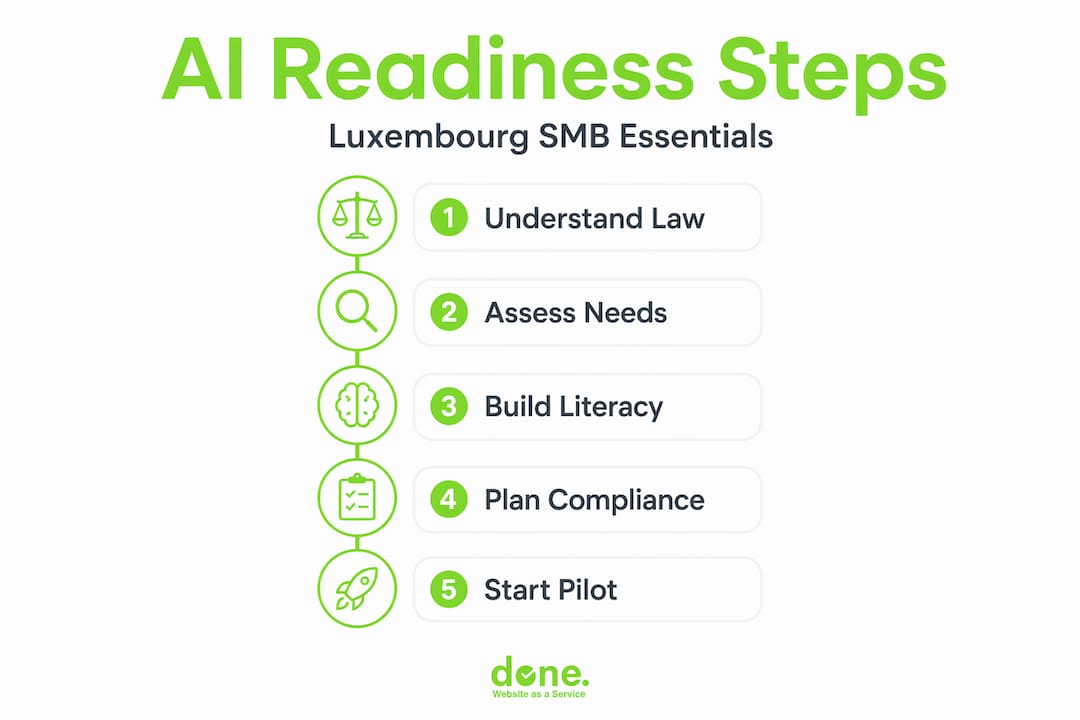

- Many Luxembourg SMEs find AI implementation complicated due to evolving regulations like the EU AI Act and GDPR.

- Leveraging national support programs such as the Luxembourg AI Factory and AI4LUX helps businesses adopt AI responsibly and efficiently.

For many small and medium-sized businesses in Luxembourg, AI implementation feels like stepping into a room where the rules keep changing. The EU AI Act, GDPR obligations, data sovereignty concerns and a fast-moving technology landscape all arrive at once, leaving many owners unsure where to start. This guide cuts through that complexity. We will walk you through the regulatory landscape, the national support available to you, and the practical steps to deploy AI solutions confidently, compliantly, and in a way that genuinely improves how your business operates.

Table of Contents

- Understanding AI requirements and regulations in Luxembourg

- Leveraging national support and structured frameworks for AI adoption

- Preparing your organisation for AI implementation

- Executing and monitoring AI projects responsibly

- Verifying outcomes and iterating for continuous improvement

- Rethinking AI implementation: practical advice from the front lines

- How Done.lu supports your AI implementation journey

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Understand regulation | Grasp the EU AI Act and GDPR requirements early to steer your AI projects legally and effectively. |
| Use national resources | Leverage the Luxembourg AI Factory and AI4LUX campaigns for structured support and funding access. |
| Prepare internally | Ensure data readiness and AI literacy in your team to smooth deployment and compliance. |
| Deploy responsibly | Follow best practices including using CNPD tools and sandboxes to minimise risk and enhance outcomes. |
| Iterate continuously | Measure, review, and improve your AI solutions regularly to sustain benefits and meet evolving rules. |

Understanding AI requirements and regulations in Luxembourg

Before you write a single brief or sign a contract with an AI vendor, you need to understand the legal environment you are operating in. This is not a reason to delay. It is a reason to start informed.

Artificial Intelligence in Luxembourg is governed by a risk-based framework under the EU AI Act, with national compliance coordinated by the CNPD (Commission Nationale pour la Protection des Données). That risk-based approach means the rules you face depend entirely on what your AI system does and how it makes decisions.

Here is how the classification works in practice:

- Unacceptable risk systems (such as social scoring or real-time biometric surveillance in public spaces) are banned outright.

- High-risk systems, for example AI used in hiring, credit scoring, or medical triage, carry the heaviest documentation and transparency requirements.

- Limited and minimal risk systems, which cover most tools SMBs actually use today such as chatbots, email automation, or content generation, require basic transparency disclosures but little else.
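
As a rough illustration of the tiers above, the classification can be sketched as a simple lookup. This is a minimal sketch that uses only the examples named in this section; the labels, the `triage` function, and the mapping itself are hypothetical and are not a substitute for a proper legal assessment.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED_OR_MINIMAL = "limited_or_minimal"


# Illustrative mapping built only from the examples named in this section.
# The keys are hypothetical labels, not terms defined by the AI Act.
EXAMPLE_USE_CASES = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "realtime_public_biometric_surveillance": RiskTier.UNACCEPTABLE,
    "hiring": RiskTier.HIGH,
    "credit_scoring": RiskTier.HIGH,
    "medical_triage": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED_OR_MINIMAL,
    "email_automation": RiskTier.LIMITED_OR_MINIMAL,
    "content_generation": RiskTier.LIMITED_OR_MINIMAL,
}

# Short summary of what each tier means, paraphrased from the list above.
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: "banned outright",
    RiskTier.HIGH: "heaviest documentation and transparency requirements",
    RiskTier.LIMITED_OR_MINIMAL: "basic transparency disclosures",
}


def triage(use_case: str) -> str:
    """Return a first-pass tier for a known example, or flag it for assessment."""
    tier = EXAMPLE_USE_CASES.get(use_case)
    if tier is None:
        return "unknown: needs a proper assessment"
    return f"{tier.value}: {OBLIGATIONS[tier]}"


print(triage("chatbot"))
print(triage("hiring"))
```

Anything not in the lookup is deliberately returned as unknown, since a real classification depends on what the system does and how it makes decisions.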

The AI Act entered into force on 1 August 2024, with phased compliance obligations running through to 2027. The prohibitions on unacceptable-risk systems have applied since 2 February 2025. Requirements for high-risk systems will apply progressively. That timeline gives you a window to prepare, but it is not an invitation to wait.

Alongside the AI Act, you must account for:

- GDPR requirements around personal data used to train or run AI models.

- NIS2 obligations if your business operates in critical infrastructure or digital services.

- Any sector-specific rules in finance, healthcare, or legal services that layer on top of the general framework.

Pro Tip: Do not treat GDPR and AI Act compliance as two separate checklists. They overlap significantly, particularly around data minimisation, transparency, and individuals’ rights. Handling them together saves time and avoids contradictory policies. Our AI and GDPR guidelines resource is a useful starting point for mapping these obligations together.

The key message here is this: build AI literacy now, even before your first deployment. Understanding the basics of risk classification and data protection positions your business to move quickly once you are ready, rather than scrambling to catch up once regulations are fully enforced.

Leveraging national support and structured frameworks for AI adoption

Luxembourg has invested meaningfully in helping businesses adopt AI responsibly. You do not have to navigate this alone, and you certainly do not have to fund every step from your own pocket.

The Luxembourg AI Factory operates as a one-stop-shop for businesses at any stage of their AI journey. It is organised around four pillars:

- Inspire: Awareness events, real-world use cases, and peer learning opportunities to help you understand what AI can realistically do for your type of business.

- Assess and accelerate: Practical readiness assessments that evaluate your data infrastructure, team capabilities, and process maturity before you commit to any technology.

- Connect: Direct introductions to vetted AI solution providers, academic partners, and technical experts operating within the Luxembourg ecosystem.

- Fund: Guidance on accessing national and EU-level financial support, including grants and co-funding instruments specifically aimed at SME digital transformation.

The AI Factory supports over 150 SMEs with AI adoption services, which means there is already a body of local experience to draw on. You are not the first to ask these questions.

Running alongside the AI Factory, the AI4LUX national campaign takes a broader angle. It promotes responsible AI adoption as both an economic priority and a matter of national sovereignty, with a specific focus on deploying secure, on-site AI within public administration and encouraging private sector equivalents.

Here is a practical comparison of the key resources available to Luxembourg SMBs:

| Resource | Type | Primary purpose | SME applicability |
|---|---|---|---|
| Luxembourg AI Factory | Government programme | End-to-end AI adoption support | High, all sectors |
| AI4LUX campaign | National initiative | Promote sovereign, responsible AI | High, focus on data sensitivity |
| CNPD DAAZ platform | Free e-learning tool | GDPR and AI Act compliance training | High, all staff levels |
| EU AI regulatory sandbox | Testing environment | Safe pilot of new AI systems | Medium, innovation-focused SMEs |
| Luxinnovation | National innovation agency | Funding and project development | High, R&D and digital transformation |

To make the most of these resources, follow this sequence:

- Attend an Inspire event or an AI4LUX briefing to calibrate your expectations.

- Request an assessment through the AI Factory to understand your current readiness level.

- Use the output to build a prioritised roadmap of two or three high-value AI use cases.

- Identify which funding instruments apply to your situation before committing budget.

- Engage a local implementation partner to move from roadmap to working solution.

Pro Tip: Do not underestimate the value of peer connections from the AI Factory’s Connect pillar. Speaking with another SMB owner in your sector who has already deployed a similar tool will tell you more about real-world friction points than any vendor presentation. For a deeper look at how this plays out in practice, our guide on practical AI adoption for SMEs covers the sequencing in detail.

Preparing your organisation for AI implementation

Good AI implementation begins months before any software is installed. The businesses that get the best results are the ones that did the groundwork first.

Identifying your highest-value use cases

Start by listing the tasks in your business that are repetitive, time-consuming, and based on structured data or defined rules. Common examples in Luxembourg SMBs include invoice processing, client communication triage, contract review, and multilingual content generation. Rank these by potential time savings and risk level. Tackle limited-risk, high-frequency tasks first.

Data readiness and GDPR alignment

Before feeding any data into an AI system, work through this checklist:

- Do you know exactly what personal data you hold and where it is stored?

- Do you have a legal basis under GDPR for using that data in an AI process?

- Can you fulfil data subject rights (access, deletion, correction) if your data is used in a model?

- Is your data clean, consistent, and sufficient in volume for the AI task you have in mind?

- Have you reviewed the data handling terms of your chosen AI vendor?

The Digital Omnibus proposal underlines that SMEs should build AI governance early, with documented inventories, risk assessments, and clearly assigned internal responsibilities, rather than retrofitting compliance after deployment.

Building a basic AI governance structure

You do not need a dedicated AI ethics team to do this well. At a minimum, assign:

- One person responsible for overseeing AI tool selection and monitoring.

- A simple register of all AI tools in use, including their risk classification, data inputs, and intended purpose.

- A process for reviewing AI outputs periodically, particularly where decisions affect customers or employees.
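
The register described above does not need special software. As a minimal sketch, assuming a small Python script is acceptable in your environment, it could look like the following. The class names, field names, and the two sample entries are hypothetical and only mirror the fields listed in this section.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AIToolEntry:
    """One row in the AI tool register."""
    name: str
    risk_classification: str   # e.g. "high" or "limited/minimal"
    data_inputs: List[str]
    intended_purpose: str
    owner: str                 # person responsible for selection and monitoring


@dataclass
class AIRegister:
    entries: List[AIToolEntry] = field(default_factory=list)

    def add(self, entry: AIToolEntry) -> None:
        self.entries.append(entry)

    def names_by_risk(self, risk_classification: str) -> List[str]:
        """Return the names of tools recorded under a given risk classification."""
        return [e.name for e in self.entries
                if e.risk_classification == risk_classification]


# Hypothetical entries, for illustration only.
register = AIRegister()
register.add(AIToolEntry(
    name="Invoice assistant",
    risk_classification="limited/minimal",
    data_inputs=["supplier invoices"],
    intended_purpose="Extract invoice fields for bookkeeping",
    owner="Office manager",
))
register.add(AIToolEntry(
    name="CV screening tool",
    risk_classification="high",
    data_inputs=["candidate CVs"],
    intended_purpose="Shortlist applicants",
    owner="HR lead",
))

print(register.names_by_risk("high"))
```

A shared spreadsheet with the same columns would serve equally well; the point is that every tool has a named owner, a risk classification, and a recorded purpose.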

Advancing AI literacy across your team

The CNPD’s DAAZ e-learning platform is free and covers both GDPR fundamentals and AI Act requirements in accessible language. Encourage all staff who interact with AI tools to complete it. This is not just a compliance tick-box. Employees who understand what an AI tool can and cannot do will catch errors earlier and use the tools more effectively.

Pro Tip: Integrate your GDPR compliance review and your AI governance planning into the same process. Running them in parallel saves time, avoids duplicated effort, and ensures your documentation does not contradict itself. Our AI consulting for SMBs service is designed around exactly this combined approach.

Executing and monitoring AI projects responsibly

With preparation complete, execution becomes far more manageable. The goal at this stage is controlled deployment, not a big-bang rollout.

Follow this sequence for each AI project:

- Define success clearly: Set measurable targets before you start. For example, “reduce invoice processing time by 40%” or “handle 60% of tier-one customer queries automatically.”

- Run a limited pilot: Deploy the AI tool for one team, one process, or one data set first. This limits risk and generates real evidence before wider rollout.

- Complete a compliance check: Before the pilot goes live, confirm risk classification, data processing records, and user communication requirements are in place.

- Train the team: Ensure every person who interacts with the tool understands its purpose, its limitations, and what to do when it produces an unexpected output.

- Monitor outputs actively: In the first weeks, review AI decisions or outputs manually on a sample basis to catch systematic errors or bias early.

- Document everything: Record configuration decisions, testing results, and any issues identified during the pilot for your governance register.
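
The "monitor outputs actively" step can be sketched as drawing a repeatable random sample of AI outputs for manual review. This is one possible approach, not a prescribed method; the function names, the 10% sampling fraction, and the output identifiers are assumptions made for illustration.

```python
import random
from typing import List, Sequence


def sample_for_review(output_ids: Sequence[str], fraction: float, seed: int) -> List[str]:
    """Pick a reproducible random sample of outputs for manual review."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    if not output_ids:
        return []
    # Always review at least one item, even for very small batches.
    k = max(1, round(len(output_ids) * fraction))
    rng = random.Random(seed)  # fixed seed so the sample can be re-created for the record
    return sorted(rng.sample(list(output_ids), k))


def observed_error_rate(reviewed: int, errors: int) -> float:
    """Share of manually reviewed outputs that contained an error."""
    if reviewed <= 0:
        raise ValueError("reviewed must be positive")
    return errors / reviewed


# Hypothetical batch of 200 processed invoices from a pilot week.
batch = [f"invoice-{i:03d}" for i in range(200)]
to_review = sample_for_review(batch, fraction=0.10, seed=42)
print(len(to_review), "outputs selected for manual review")
```

Recording the seed and the sampled identifiers in the governance register makes the review itself auditable.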

Here is how AI-augmented monitoring differs from traditional project oversight:

| Aspect | Traditional monitoring | AI-augmented monitoring |
|---|---|---|
| Data review frequency | Weekly or monthly reports | Continuous, real-time dashboards |
| Error detection | Manual review, often delayed | Automated flagging of anomalies |
| Compliance tracking | Periodic audits | Ongoing logging against defined rules |
| Staff involvement | High manual input | Focused on exception handling |
| Iteration speed | Slow, planned cycles | Rapid adjustments based on live data |

The CNPD provides free tools including the DAAZ e-learning platform and hands-on workshops to help your staff stay current with both GDPR and AI Act obligations after deployment. These are not one-off resources. They are updated as the regulatory landscape evolves.

Attending a CNPD workshop after your first AI deployment is one of the most practical things you can do. It will surface compliance gaps you did not know existed and give you direct access to regulatory guidance before those gaps become problems.

For businesses in data-sensitive sectors like legal, finance, or healthcare, national and EU-level AI regulatory sandboxes offer a supervised environment to test new AI systems before full deployment. This allows you to validate compliance and performance without the risk of operating an untested system on live client data.

Pro Tip: Schedule a structured review of your AI tool’s outputs for bias and data accuracy at least quarterly. This is not just good practice. Under the AI Act, it is becoming an expectation for any system that influences decisions about people. Our resource on business automation with AI in Luxembourg includes monitoring frameworks specifically designed for SMBs.

Verifying outcomes and iterating for continuous improvement

Deploying an AI tool is not the end of the project. It is the beginning of a different kind of work: measuring, learning, and improving.

Key performance indicators to track

Start with these practical measures across two dimensions:

- Operational: Time saved per process, error rates before and after AI, volume of tasks handled automatically, staff hours reallocated to higher-value work.

- Compliance: Number of data subject requests fulfilled, incidents logged, governance register up to date, staff training completion rates.
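
A couple of these measures are simple arithmetic. The sketch below shows one way to compute a percentage reduction against a target such as "reduce invoice processing time by 40%", plus a training completion rate. The function names and all input figures are hypothetical.

```python
def percent_reduction(before: float, after: float) -> float:
    """Percentage reduction from a baseline value to a new value."""
    if before <= 0:
        raise ValueError("baseline must be positive")
    return (before - after) * 100 / before


def completion_rate(completed: int, total: int) -> float:
    """Percentage of staff who have completed training."""
    if total <= 0:
        raise ValueError("total must be positive")
    return completed * 100 / total


def meets_target(actual_percent: float, target_percent: float) -> bool:
    return actual_percent >= target_percent


# Hypothetical figures: minutes per invoice before and after the pilot.
reduction = percent_reduction(before=10.0, after=6.0)
print(f"Processing time reduced by {reduction:.1f}%")
print("Target met:", meets_target(reduction, target_percent=40.0))

# Hypothetical figures: 9 of 12 staff have completed training.
print(f"Training completion: {completion_rate(9, 12):.0f}%")
```

Measuring the baseline before the pilot starts is what makes the comparison meaningful; without it, the "before" figure is a guess.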

Gathering feedback from users and employees

Your employees who use AI tools daily will notice issues before any dashboard does. Build a simple feedback loop: a monthly check-in, a shared document for logging unexpected outputs, or a short anonymous survey. Act on what you hear. In our experience, staff engagement with AI tools tends to increase when people feel their observations are taken seriously.

Follow these steps to keep your AI governance current:

- Review risk classifications annually or whenever the tool’s function or data inputs change significantly.

- Update your data processing records when new personal data categories are introduced.

- Reassess vendor contracts when AI Act obligations evolve, particularly as the 2027 deadline approaches.

- Re-run staff training when new features are introduced to existing tools.

- Document every change and the rationale behind it.
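
The first step above, reviewing annually or whenever the function or data inputs change, can be expressed as a small rule. This is a minimal sketch; the `review_due` function, its parameters, and the 365-day default are assumptions for illustration.

```python
from datetime import date, timedelta


def review_due(
    last_review: date,
    today: date,
    function_changed: bool = False,
    data_inputs_changed: bool = False,
    interval_days: int = 365,
) -> bool:
    """True if a risk classification review is due.

    A review is triggered by a significant change to the tool's function
    or data inputs, or by the annual interval elapsing.
    """
    if function_changed or data_inputs_changed:
        return True
    return today - last_review >= timedelta(days=interval_days)


# Hypothetical dates for illustration.
print(review_due(date(2025, 1, 1), date(2025, 6, 1)))
print(review_due(date(2025, 1, 1), date(2025, 6, 1), data_inputs_changed=True))
```

Whether a change counts as "significant" remains a human judgement; the rule only makes sure the question gets asked on schedule.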

The EU AI Act and Digital Omnibus frameworks explicitly encourage continuous governance, not just point-in-time compliance. Regulators want to see that businesses are actively managing their AI systems over time, not simply obtaining an initial sign-off and moving on.

Iterations do not have to be large to be valuable. Adjusting a prompt template, refining a data input, or adding a manual review step for a specific output type can measurably improve accuracy without disrupting the wider deployment. Small, documented changes accumulate into significant improvements over a six-to-twelve-month period.

Pro Tip: Document every iteration, no matter how minor it seems. When it comes to a regulatory audit or a client inquiry about your AI practices, a clear record of what changed, when, and why is far more persuasive than verbal assurances. It also makes optimising AI adoption for efficiency considerably easier when you can track what actually worked.

Rethinking AI implementation: practical advice from the front lines

Here is something we see regularly working with Luxembourg SMBs: businesses that wait for perfect regulatory clarity before starting their AI projects consistently fall behind those that start with the information available today.

The EU AI Act is a phased regulation. The obligations that apply to most SMBs right now are largely about transparency and documentation, not elaborate technical audits. Waiting for every guidance note to be published before you act means your competitors who started earlier will be eighteen months ahead of you by the time you feel comfortable moving. The window of advantage is real, and it is closing.

Realistic timelines matter too. An AI project that takes three months to plan properly is far less painful than one rushed in three weeks that fails six months later. We have seen businesses overcommit to ambitious AI programmes, lose confidence when early results disappoint, and walk away from technology that would have worked with more careful scoping. Treat each project as a contained pilot with a clear success measure, and you will maintain momentum without burning out your team.

The most important mindset shift is this: AI governance should not be a separate workstream running alongside your business. It should be woven into how you already manage projects, vendor relationships, and staff responsibilities. When it sits in its own silo, it gets deprioritised. When it is part of your normal operating rhythm, it becomes self-sustaining.

Collaboration with local partners and government initiatives accelerates this considerably. The Luxembourg AI ecosystem is genuinely well-resourced for a market of this size. Use it. The practical AI adoption steps that work in this market are built on exactly this kind of connected approach, combining internal preparation with external support rather than trying to solve everything in-house.

Pro Tip: Treat AI implementation as a continuous journey with quarterly reviews rather than a project with a defined end date. Businesses that plan for ongoing iteration outperform those that plan for a single deployment, both in results and in compliance readiness.

How Done.lu supports your AI implementation journey

Taking the steps described in this guide is entirely achievable, but it is significantly faster with a partner who knows Luxembourg’s regulatory environment, has implemented AI solutions across multiple sectors, and can translate strategy into working technology.

At Done.lu, we work with Luxembourg SMBs from initial audit through to full deployment and team training. Our AI consulting services for SMBs are built around your operational realities, not generic frameworks. We help you identify the right use cases, structure your governance, and deploy tools that are both effective and compliant. Whether you need business automation with AI services to reduce manual workload, or GDPR and AI compliance guidance to ensure your deployments meet every legal requirement, we bring hands-on experience and a human-first approach to every engagement. Let’s build something that works.

Frequently asked questions

What are the main AI regulations that Luxembourg SMBs must comply with?

Luxembourg SMBs must comply primarily with the EU AI Act and GDPR, with national compliance coordinated by the CNPD. Together, these rules aim to ensure AI systems are safe, transparent, and protective of personal data. Sector-specific rules in finance, healthcare, and legal services may add further obligations depending on your industry.

How can Luxembourg SMBs access government support for AI projects?

SMBs can access services from the Luxembourg AI Factory, which supports over 150 SMEs with assessments, connections to partners, and funding guidance, alongside the AI4LUX campaign for sovereign AI deployment support. Both are designed specifically for businesses at varying stages of AI readiness.

Is AI literacy important for my business staff?

Yes. The AI Act expects organisations to build AI literacy among staff to support responsible use, and informed employees catch errors and compliance issues far earlier than automated systems alone. Starting with free resources like the CNPD’s DAAZ platform is the most practical first step.

What practical tools help with GDPR and AI compliance in Luxembourg?

The CNPD offers a DAAZ e-learning platform and practical workshops covering both GDPR and AI Act obligations, available free of charge to any business. These resources are regularly updated to reflect the latest regulatory developments, making them reliable for ongoing staff training rather than just initial onboarding.

Can I test AI systems safely before full deployment?

Yes. National AI regulatory sandboxes let Luxembourg SMBs test new systems under supervised, compliant conditions, and the Digital Omnibus proposal would expand sandbox access at EU level. Sandboxes are particularly valuable for businesses in sensitive sectors where a failed deployment would carry significant reputational or legal consequences.